1. Introduction

In today’s world, companies need to be highly agile to cope with a constantly changing and increasingly uncertain business environment while also dealing with a fast-growing combination of products, services, materials, technologies, machines, and people skills. A successful manufacturing supply chain requires orchestration, coordination, and synchronization of each of these elements operating independently and cohesively together. Now and into the future as Industry 4.0 unfolds, as computers are connected with the aim of ultimately making decisions and running operations with minimal human involvement, companies are struggling to manage these multi-faceted and complex digital transformation projects. Below are some of the key challenges stakeholders and transformation projects face in their journey to a highly agile and “smart” (low touch/no touch) manufacturing supply chain.

Understanding the current processes and constraints

Although people have been working in their factories and supply chains for many years, it is still difficult to fully understand and articulate all the processes in detail since much of the information is compartmentalized between different organizational structures within the company. Understanding starts by identifying all the physical constraints in the process of sourcing material and producing and distributing products to customers. There are also many different documents describing the business rules that management wants to apply to govern the process, often contradicting current reality. In most organizations a large amount of execution knowledge and detailed decision logic is still tribal knowledge contained in the heads of the people making these day-to-day decisions on the shop floor, which is very hard to replicate in any system. This knowledge is often lost as the workforce ages and people retire.

Identifying the best data sources and aggregating accurate relevant data

Understanding the current quality and correlation of data between the various enterprise systems is a huge challenge, as the values for the same fields in different systems often differ, making it difficult to determine which values are correct. The level of detail also differs between systems depending on each system’s application, which makes correlation and aggregation of data very complex. Synchronizing different data sources to ensure they are all time-relevant (same timestamp) is a challenge, as some systems run close to real time while others are batch-oriented and run only once per day. The key to the transformation process is identifying the sources and flow of data to establish a relevant data pipeline to support process modeling, control, dashboarding and analysis.

Identify and explore areas for transformation and modernization

It is challenging to identify and assess the value of process changes and optimizations aimed at improving performance in the factory or supply chain. Perceived performance or value gains often lead to large capital investments in capacity and extensions to physical infrastructure for future growth and new products, without a detailed understanding of the requirements or potential impact on the business. The same holds true for automation and digitalization initiatives intended to improve efficiency and performance, as these projects are often developed in isolation. As a result, projects fail to deliver the overall expected value and the process transformation required to move the business forward on its digital transformation goals.

Accurate predictions of future behavior and performance

Transformation often involves many concurrent aspects such as people, process, equipment, new products, sales, global reach, distribution and more. Without understanding the end-to-end impact of proposed changes in business operations, including policies or processes, businesses fail to meet expectations, potentially wasting money on investments that do not deliver the expected value. Key items within a digital transformation include understanding the impact of automation, evaluating alternatives to understand the ROI of different options, and visualizing and presenting future results to all stakeholders for buy-in and decision-making.

The most effective way to enable and facilitate digital transformation and address the challenges discussed above is to create a detailed simulation-based virtual model or offline Process Digital Twin of the processes/factory/supply chain/warehouse for a step-by-step design and analysis of the current and future processes, referred to as a predictive solution. Then, in time, connect the real data of the enterprise systems to the virtual model to become the online Process Digital Twin for operational deployment and near-real-time decision-making, referred to as the prescriptive solution. The underlying technology is described in more detail in the Simio Simulation Solution Whitepaper, also available from the Simio website.

This whitepaper will describe the key steps and tasks to be completed as part of the digital transformation journey. These are mapped on a high-level process step chart called the “Digital Continuum”. Many organizations would like to perform these digital and business transformation projects as fast as possible, but there are multiple underlying people, process and technology challenges that need to be addressed to ensure a successful transformation project that puts the business on a new trajectory of efficiency and performance.

2. Key Digital Transformation Technology, People, Process and Data Challenges

There are various key factors, all contributing to making business and digital transformation challenging. Businesses struggle to manage their people, process, data and technology challenges in order to meet the growing demands required to thrive and compete effectively in the new VUCA (Volatility, Uncertainty, Complexity and Ambiguity) world. Some of the most important challenges and constraints are highlighted and discussed in more detail below:

- Having access to a single detailed constraint model or Process Digital Twin of the system including all the equipment, labor, tooling, transportation, and material, enables a deeper understanding of current processes. It also allows for prediction of future behavior and performance, supporting informed decisions for future investment and operations.

- Another challenge is in creating a Process Digital Twin simulation model that captures the impact of all the business rules that regulate operations such as inventory policies, labor policies, operating procedures, and transportation restrictions. These business rules are often created by management without understanding the full impact on the operations. Equally important is the ability to capture detailed day-to-day decision logic as applied by the planners, operators, and supervisors running and managing the operations day to day, otherwise known as tribal knowledge. These operational rules and decision logic are often not fully documented or transparent to the rest of the organization. This process model or Process Digital Twin needs to become the knowledge base of the operations to enable accurate replication and analysis for future predictions.

- One of the biggest challenges with any digital transformation project is the access to and delivery of the required enterprise data, both static (ERP, SCP, etc.) and dynamic (MES, IoT, etc.), with the required accuracy to support the creation of detailed data-driven process models and/or Process Digital Twins. This data is often distributed between multiple systems using different numbering and naming conventions with inconsistent data being recorded to different levels of detail for evaluation and comparison.

- People’s roles and the performance incentives that motivate and direct current behavior are not always aligned with the overall objectives of the business and its key KPIs. Incentives are often narrowed to specific functions within the overall process. These siloed objectives often work against the overall goals of the business because localized incentive and/or compensation plans are set without understanding how they affect overall business performance.

- Businesses are all striving to become more agile to enable them to react faster to changes in business conditions or operational environments (VUCA). Traditional solver-based optimization methods used for decision support are no longer fast and agile enough. Fast optimization with AI agents such as Deep Neural Network (DNN) and Machine Learning (ML) to support multi-objective KPIs is a key enabler for rapid replanning and optimization in near-real-time.

- For organizations to become agile and confident about execution, they need to do business planning and scheduling in a continuous fashion as a rolling plan or schedule instead of the time-bucketed planning approach, typically in weekly buckets, used by most enterprise systems today. This time-bucketed approach creates a misleading sense of capacity and material feasibility, because resource and material availability must be synchronized with the actual execution timeline to ensure full readiness for execution. Typical resource calendar-based planning and scheduling systems, supported by most ERP and APS vendors, only assess feasibility within a calculated capacity bucket for a specific time period, rather than ensuring true synchronization with the execution plan.

- The ability to do integrated planning across all organizational silos and relevant time ranges within the supply chain is key to avoid infeasibility when executing the plan. Planning today is typically done by different teams and software components across the planning horizon (operational, tactical & strategic) using incomplete constraints as well as optimistic capacity and material availability models. Due to the complexity, detailed scheduling is often also done in organizational silos to simplify the task and focus on specific functions causing synchronization delays between operating units when executing these schedules.

- The ability to capture the end-to-end business process and associated knowledge base in a digital replica of that process that can accurately replicate the behavior of the process over any time horizon (i.e., hours, days, months) is key to perform detailed what-if analysis and evaluations of current and future business performance, ensuring informed decision making by using a singular digital reference model.

- A process digital twin provides stakeholder access to a centralized control tower as a single source of the truth for all decision making and performance measurement. Today stakeholders across operational functions and business timelines use different applications and data sources for analysis and decision support, resulting in disconnected planning and decision making across the enterprise.
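The difference between time-bucketed and continuous planning described in the list above can be illustrated with a minimal sketch. Everything in it is hypothetical: the three jobs, the 40-hour weekly capacity, the single resource, and the function names are assumptions made for illustration, and sequencing by earliest due date is just one simple dispatching rule. It is not how any particular ERP, APS or simulation product evaluates feasibility.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    hours: float           # processing time on the single resource
    material_ready: float  # hour (from start of week) when material arrives
    due: float             # hour by which the job must be finished


def bucket_feasible(jobs, capacity_hours):
    """Bucketed check: the total load only has to fit the bucket's capacity."""
    return sum(j.hours for j in jobs) <= capacity_hours


def timeline_late_jobs(jobs):
    """Place jobs on a timeline in due-date order, waiting for material
    when needed, and return the names of jobs that finish late."""
    clock = 0.0
    late = []
    for job in sorted(jobs, key=lambda j: j.due):
        start = max(clock, job.material_ready)  # cannot start before material arrives
        clock = start + job.hours
        if clock > job.due:
            late.append(job.name)
    return late


# Illustrative data only (assumed values).
WEEK_CAPACITY = 40.0
jobs = [
    Job("A", hours=10, material_ready=0, due=12),
    Job("B", hours=15, material_ready=20, due=30),
    Job("C", hours=10, material_ready=0, due=40),
]

print("Fits weekly bucket:", bucket_feasible(jobs, WEEK_CAPACITY))
print("Late on the timeline:", timeline_late_jobs(jobs))
```

In this toy case the 35 hours of work fit comfortably into the 40-hour bucket, yet the timeline view shows late jobs because material for one job arrives too late in the week, which is the kind of mismatch a bucketed check cannot see.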

3. The Digital Continuum

Digital and/or business transformation is very complex, and companies often underestimate the full spectrum of activities and milestones required for their transformation initiatives to succeed. This is a systematic process, and every organization is keen to complete it as fast as possible, but some of the phases require more effort and time to complete before the next phase can succeed. The phasing and time required to complete each phase are largely dependent on the current digital and process maturity of the organization. Businesses often overestimate their level of maturity and believe that their processes are well documented and that their data and systems are in a better state of accuracy and readiness than they are. This is typically discovered after doing detailed analyses of the current business processes and systems.

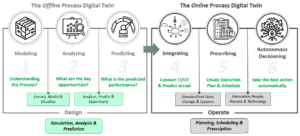

To provide organizations and digital transformation teams with some experience-based guidance, Simio produced a digital transformation roadmap that highlights the key steps and activities to be performed as part of the total transformation journey. This transformation roadmap for project phasing and execution is called the Digital Continuum and will be described at a high level as illustrated in Figure 1 below.

The six (6) steps shown in Figure 1 below serve as a guideline of what the transformation timeline and steps could look like and are by no means absolute. What is important to discuss here are the learnings and progress that need to be made to advance on this transformation journey and timeline. The actual timing will be determined by the rate at which the business can complete the key learnings and progress required at each step. This process is also focused on the development of a Process Digital Twin to replicate the current behavior of the system to analyze and evaluate current and future performance. This roadmap supports the steps to move from the “design” function (Simulation, Analysis & Prediction) to a fully integrated “operate” function (Planning, Scheduling & Prescription).

There are four (4) key transformation phases and a set of requirements that will be discussed in more detail below and are shown in Figure 1 as highlighted boxes (green & gray) between steps.

Figure 1: The Digital Continuum

3.1 Extract, Model & Visualize

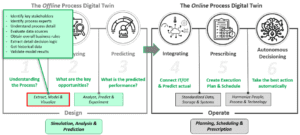

This is a critical phase that enables organizations to thoroughly assess and understand their existing business processes, review current business rules and best practices, and determine the most effective way to configure and manage their current operations. It will also help them to review and evaluate their current systems and data readiness to develop a complete Process Digital Twin, which will become the knowledge base and current business reference model of their business to support future process improvement or transformation initiatives. As part of the first two steps, “Modeling” and “Analyzing”, the project team must achieve the following key objectives to ensure ongoing success and support from the key stakeholders as discussed and shown in Figure 2 below:

Figure 2: Extract, Model & Visualize

- Identify all the key stakeholders who will directly or indirectly benefit from the results and decision-making, ensuring they are involved in the design process and can offer knowledge, support and resources moving forward.

- Identify all the primary process experts who can provide detailed process knowledge and accurately describe the process steps in sufficient detail to correctly model at the right level of detail, ensuring that the results are accurate and relevant for decision-making at all levels.

- Understand and capture all the process flows, resource and material requirements, performance requirements and current challenges. This will allow the development team to architect the Process Digital Twin and associated data model in a way to accurately represent all the processes and associated constraints.

- Identify and evaluate all the potential data sources to find the most relevant sources to use for both offline and potential online data feeds. Also identify required data that might have to be generated and maintained manually until other sources can be obtained or implemented as formal data sources for the additionally required data.

- Obtain and understand all the overall business rules as created and applied by the management team such as labor policies, inventory policies, supplier requirements, customer service metrics, safety requirements, etc. The impact of these business rules is often not fully understood and is just accepted as a requirement from the business. The Process Digital Twin then allows for detailed analysis to fully understand and potentially change some of these business rules to better serve the business needs.

- Extract detailed decision logic by engaging with the supervisors and operators on the shop floor or execution management teams, as these detailed decision rules are mainly experience-based, typically not officially documented and often vary from site to site or even between departments at the same site. These detailed shop floor decisions that are made day-to-day are often not visible to the management team as they are not documented in any formal system of record, making them difficult to capture as part of the development process.

- Find and obtain good quality and sufficient historical data to use for testing and validation. Historical data forms the backbone of the testing and validation process to evaluate the Process Digital Twin’s performance against actual data from a past time-period.

- Perform detailed verification and validation of the model and results to ensure the delivery of credible results. This requires both good quality historical data and input from all processes and operational experts to evaluate both the process representation and results delivered by the Digital Twin.
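The validation objective at the end of the list above, comparing the Digital Twin’s results against historical data, can be sketched as a simple error check. This is only an illustration under stated assumptions: the daily unit counts are invented, the 10% tolerance is an arbitrary threshold, and mean absolute percentage error (MAPE) is one common choice of metric rather than a method prescribed by this whitepaper.

```python
def mape(actual, simulated):
    """Mean absolute percentage error in percent.
    Periods where the actual value is zero are skipped."""
    pairs = [(a, s) for a, s in zip(actual, simulated) if a != 0]
    if not pairs:
        raise ValueError("no non-zero actual values to compare against")
    return 100.0 * sum(abs(a - s) / abs(a) for a, s in pairs) / len(pairs)


# Assumed example data: units produced per day in a past period.
historical_units = [100, 120, 80, 100]   # recorded by the enterprise systems
simulated_units = [110, 114, 80, 95]     # produced by the model for the same days

TOLERANCE_PCT = 10.0  # hypothetical acceptance threshold agreed with stakeholders

error = mape(historical_units, simulated_units)
is_valid = error <= TOLERANCE_PCT
print(f"MAPE: {error:.1f}%  within tolerance: {is_valid}")
```

A numeric check like this complements, but does not replace, the review by process and operational experts that the list calls for.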

3.2 Analyze, Predict & Experiment

After creating, testing and validating the Process Digital Twin as part of the previous steps, the Process Digital Twin is now ready to be used for evaluating both current and future state performance. As part of the “Analyzing” and “Predicting” steps (2 & 3) the project team must achieve the following objectives to ensure ongoing success and support from the business leaders and key stakeholders as discussed and shown in Figure 3 below:

Figure 3: Analyze, Predict & Experiment

- Identify process constraints and bottlenecks caused by issues such as resource and/or material availability, buffer and batch sizing, labor scheduling and more, that might be constraining the process flow and preventing the business from achieving its KPIs.

- Identify possible opportunities for improvement that might include changes to the process flows, additional equipment, better material management, layout changes, new labor schedules, automation, flow-based inventory policies, etc.

- Obtain, manage and transform the data to get it in the required form that will match the agreed Process Digital Twin template requirements for the offline model with a clear view to the online integration requirements or data pipeline to support an automated data feed moving forward.

- Perform experimentation by creating various data sets and model configurations (preferably data generated) to run scenarios for evaluation to better understand the current behavior as well as predicted future results based on different transformational initiatives or continuous improvement opportunities.

- Evaluate alternate proposals from different stakeholders and execution management teams such as new capital investment projects and process improvement opportunities to analyze the impact on overall business improvement as well as determine the ROI for each of these initiatives before committing capital and resources for implementation.

- Determine the best overall performance criteria and metrics to satisfy both the operational and financial stakeholders as well as the executive management requirements such as revenue, cost, efficiency, ROI, and customer service as some of these metrics will often compete, requiring clear business objectives to be agreed upon.

- Finalize the preferred process configuration to start delivering forward-looking predictions based on parameter changes provided by the key stakeholders such as changes in demand, new product introductions, new market sectors, labor schedules, resource and material availability, etc.

- Provide clear predictions of the expected process behavior and associated results and obtain signoffs on the desired expected future performance based on the implementation and phasing of the selected business and process changes.
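The evaluation of alternate proposals and their ROI mentioned in the list above can be illustrated with a short ranking sketch. All proposal names and figures are invented for illustration, the annual benefit is assumed to come from scenario runs of the model, and the simple undiscounted ROI formula is an assumption; a real evaluation would likely use the organization’s own financial method.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    name: str
    investment: float      # up-front capital required
    annual_benefit: float  # predicted yearly gain (assumed to come from a scenario run)


def simple_roi(proposal, years):
    """Undiscounted ROI over the horizon: (total benefit - investment) / investment."""
    total_benefit = proposal.annual_benefit * years
    return (total_benefit - proposal.investment) / proposal.investment


def rank_by_roi(proposals, years):
    """Return proposals ordered from highest to lowest ROI."""
    return sorted(proposals, key=lambda p: simple_roi(p, years), reverse=True)


# Hypothetical proposals and figures.
proposals = [
    Proposal("Add second packaging line", investment=500_000, annual_benefit=150_000),
    Proposal("New labor schedule", investment=50_000, annual_benefit=40_000),
    Proposal("AMR material handling", investment=300_000, annual_benefit=120_000),
]

ranked = rank_by_roi(proposals, years=3)
for p in ranked:
    print(f"{p.name}: ROI over 3 years = {simple_roi(p, 3):.0%}")
```

Comparing proposals on the same horizon and the same model output keeps the ranking consistent, although competing metrics such as customer service or throughput would still need to be weighed separately.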

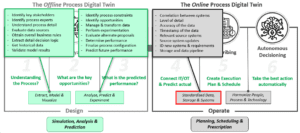

3.3 Standardized Data, Storage & Systems

Between the “Predicting” and “Integrating” steps (3 & 4), the objectives are primarily technical to accomplish the integration between the identified data sources and required development of a standardized data pipeline and storage platform to support the online Process Digital Twin. Additionally, as part of the “Integrating” and “Prescribing” steps (4 & 5), which will allow the team to plan and schedule using the Process Digital Twin in near-real-time or on demand to generate forward-looking plans and schedules for comparison or execution, the project team must accomplish the following objectives to ensure continued success and support from operations, as discussed and shown in Figure 4 below:

Figure 4: Standardize Data, Storage & Systems

- The enterprise systems landscape usually includes a variety of systems at both the corporate and operational levels, such as ERP, MES, SCP, QA, LIMS, PM, along with IoT devices and various spreadsheets used by planners and operators. These systems typically do not follow the same numbering and naming conventions and are often not integrated and synchronized. This results in significant issues with the correlation between systems resulting in conflicting data that cannot be merged into a central data source or Process Digital Twin without extensive data transformation and manipulation.

- Based on the specific system application, the data is often implemented at different levels of detail to meet each specific system’s requirements. Some production-related data might be kept at a group or family or SKU level and cannot be matched or translated into a single data source.

- It is often difficult to determine the actual accuracy of the data, since the value of a specific data element, such as the production time of a specific component on a particular resource, differs not only between the ERP system, the MES system and the planner-specific Excel sheets, but also from the actual numbers used by the operators on the shop floor. The Process Digital Twin plays a significant role in determining the valid values that best match the actual measured outcomes of the physical process.

- Different enterprise systems are often updated at different cadences, such as daily overnight runs for the ERP system, end-of-shift updates for the MES systems, and near-real-time updates for monitoring or control systems. As a result, the data in the different systems does not carry the same timestamp at any given point in time when required for analysis, planning or scheduling, leading to inaccurate results.

- Based on the level of detail and scope of the Process Digital Twin, identifying the relevant source systems to provide the most accurate and time relevant data is a key part of the process. This process can significantly minimize the level of integration and/or data transformation required to provide the Digital Twin with the required data.

- Based on data discrepancies and inaccuracies between systems, it might be required to update or enhance certain source systems to adhere to the same numbering and naming conventions or even change the level of detail for certain items or specific attribute values such as production run times or batch sizes.

- The absence of required data in the current systems will help the team to identify new systems and their specific requirements to further expand and improve the available data, such as additional MES, IoT or monitoring systems. This will help to increase the accuracy, usability and process coverage of the Process Digital Twin.

- Based on the current and planned IT infrastructure, decisions need to be finalized regarding the storage and data pipeline platform and technology. Integration and data flow can be point to point, via an intermediate staging database, or by utilizing a centralized cloud storage capability with a unified namespace (UNS), to name a few examples.
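The naming-convention and data-accuracy issues in the list above can be made concrete with a small harmonization sketch. The item codes, the cross-reference table, the run times and the 5% tolerance are all hypothetical, and the in-memory dictionary stands in for whatever staging database or master data service an organization actually uses.

```python
# Hypothetical cross-reference from each system's local item code to a shared master ID.
ITEM_XREF = {
    ("ERP", "100-234"): "WIDGET-A",
    ("MES", "WDG_A"): "WIDGET-A",
    ("ERP", "100-235"): "WIDGET-B",
    ("MES", "WDG_B"): "WIDGET-B",
}


def harmonize(records):
    """Group run times by master ID as {master_id: {system: minutes}}.
    Records with no cross-reference entry are returned separately."""
    merged, unmapped = {}, []
    for system, local_id, minutes in records:
        master = ITEM_XREF.get((system, local_id))
        if master is None:
            unmapped.append((system, local_id))
            continue
        merged.setdefault(master, {})[system] = minutes
    return merged, unmapped


def discrepancies(merged, tolerance=0.05):
    """Return master IDs whose values differ between systems by more than
    `tolerance`, measured relative to the smallest reported value."""
    flagged = []
    for master, by_system in merged.items():
        lo, hi = min(by_system.values()), max(by_system.values())
        if lo > 0 and (hi - lo) / lo > tolerance:
            flagged.append(master)
    return sorted(flagged)


# Assumed sample records: (system, local item code, production run time in minutes).
records = [
    ("ERP", "100-234", 12.0),
    ("MES", "WDG_A", 15.5),
    ("ERP", "100-235", 8.0),
    ("MES", "WDG_B", 8.2),
    ("MES", "WDG_C", 5.0),  # no cross-reference entry yet
]

merged, unmapped = harmonize(records)
print("Conflicting run times:", discrepancies(merged))
print("Unmapped records:", unmapped)
```

The sketch only flags conflicts and gaps; deciding which value is correct still depends on comparing against measured outcomes, as described in the list.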

3.4 Harmonize People, Process & Technology

Once the Process Digital Twin is fully integrated and validated to produce the feasible plans and schedules approved by supervisors and operators, the system is ready to be used in a low-touch/no-touch mode for autonomous decision making to improve overall business agility and efficiency. As part of the “Prescribing” and “Autonomous decisioning” (“Smart Factory”) steps (5 & 6) the project team must achieve the following objectives to ensure ongoing success and buy-in from all levels of the organization as discussed and shown in Figure 5 below:

Figure 5: Harmonize People, Process & Technology

- Various departments within the organization rely on data from different systems to analyze, plan and schedule the business operations. To ensure consistent decision-making across all levels, these processes must be standardized and aligned, ensuring that decisions are based on the same accurate and time relevant data following a unified methodology.

- Often different operational units within the same factory or across factories performing the same operational tasks might follow different workflows based on the experience and background of the various operators and supervisors at each of these facilities. Using the Process Digital Twin, analysis can be performed to identify the best performing workflows (“best practices”) and standardize them factory-wide or even organization-wide, allowing for better overall performance, measurement, repeatability and training of personnel.

- In high performing environments where automation is becoming a key part of the agility and estimated increase in throughput, it is often difficult to understand exactly how to implement and manage each specific area of automation. The Process Digital Twin will help businesses to understand the impact of automation and how to design and integrate automation in each specific area and determine what the expected return on investment will be.

- As operational control improves, getting more accurate near-real-time information is key. Integration with IoT-enabled systems therefore becomes a key factor in obtaining valuable status information such as tank levels, AMR locations and status of equipment to support near-real-time decision-making.

- As soon as the Process Digital Twin accurately replicates the detailed behavior and decision-making of the process, it can be used to generate synthetic training data for the training of Deep Neural Networks (DNN) and/or Machine Learning (ML) agents. These agents can then be used for near-real-time optimization, either in standalone applications or embedded as a component of the Process Digital Twin. This provides a well-managed platform to train and test AI algorithms for use in the organization while fully understanding the intended behavior and application as well as the ability to retrain when circumstances change.

- As the Process Digital Twin is used to be more prescriptive and utilized for near-real-time decisions, more and more accuracy is required. One of the key points is to capture the shopfloor decisions made day-by-day by the operators and supervisors as they run and manage the operations. This allows the team to make final detailed updates to the decision logic for the Process Digital Twin to more accurately replicate operations.

- To further enable detailed shopfloor input on operations, it may be necessary to develop specific systems and MM (Man Machine) interfaces to capture data at certain operational steps on the shop floor or as part of the execution process to obtain near-real-time data on status and progress.

- To obtain full monetization and effective use of the Digital Twin, it is important to define and distribute outputs specific to each role and stakeholder requirement. This will enable seamless decision making because of direct access to the current, synchronized and relevant data and decisions of the end-to-end systems.
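The idea of generating synthetic training data from a validated model, mentioned in the list above, can be sketched with a toy stand-in. The function `simulate_wait` below is a hypothetical placeholder for a single run of a real Process Digital Twin: it models waiting time at one resource as the sum of random processing times of the jobs ahead in the queue. The feature names, value ranges and exponential distribution are all assumptions made for illustration.

```python
import random


def simulate_wait(queue_length, mean_process_min, rng):
    """Toy stand-in for one model run: waiting time is the sum of random
    (exponentially distributed) processing times of the jobs ahead."""
    return sum(rng.expovariate(1.0 / mean_process_min) for _ in range(queue_length))


def generate_training_rows(n_rows, seed=0):
    """Sample input conditions, run the toy model, and record
    (features, outcome) rows that an ML agent could later be trained on."""
    rng = random.Random(seed)  # seeded so the data set is reproducible
    rows = []
    for _ in range(n_rows):
        queue_length = rng.randint(0, 10)
        mean_process_min = rng.uniform(2.0, 8.0)
        wait_min = simulate_wait(queue_length, mean_process_min, rng)
        rows.append(
            {
                "queue_length": queue_length,
                "mean_process_min": mean_process_min,
                "wait_min": wait_min,
            }
        )
    return rows


rows = generate_training_rows(1000)
print(len(rows), "synthetic rows, first row:", rows[0])
```

Because the rows come from a model whose behavior is understood and reproducible, the same generator can be rerun with new conditions when circumstances change and an agent needs retraining.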

4. Delivering Business Value at Each Step of the Digital Continuum

As organizations follow the steps described by the Digital Continuum, they should be able to generate business value, both quantitative and qualitative, at each key step of the journey. This is important to keep supporting and funding the ongoing development of the Process Digital Twin as part of the total business transformation journey. The process should address questions both in the design and investment phases of the transformation and business reengineering process, as well as for the daily operational management of the active, ongoing process. Below are some key value drivers associated with the 6 primary steps as shown in Figure 1.

4.1 Modeling

During this step, the team will gather all the required information regarding the end-to-end process flow, business rules and detailed decision logic applied on the shop floor to plan, schedule and run the factory operations. The team will also review all sources of data to identify accuracy and availability as well as identify shortcomings and missing data. The key value items to the business are as follows:

- A Process Digital Twin that captures all the physical constraints, process flows, business rules and decision logic in a single knowledge base of the end-to-end process/factory.

- Data and systems status reports to determine the level of digital maturity while also identifying specific required systems updates/fixes and even requirements for new or additional systems.

- A digital business reference model to test and evaluate any ongoing business improvement initiatives, as well as future changes or extensions that may be required by the business. This reference model becomes the single version of the truth to support data driven decision making by all stakeholders in the business.

4.2 Analyzing

Once the Process Digital Twin has been fully verified and validated, it is ready to be used for analysis of the current processes/factory/supply chain/warehouse. It is important to fully understand and maximize the performance of the current processes before making decisions about introducing new changes or making upgrades to the current process such as new equipment, automation, etc. The key value items to the business are as follows (representative values):

- 25% reduction in synchronization delays (unplanned downtime)

- 10% reduction in labor

- 20% improvement in throughput

- 20% improvement in resource efficiency

- 15% reduction in inventory and WIP

- 12% improvement in on time delivery

- 16% decrease in cost of production

- 25% reduction in manufacturing lead time

4.3 Predicting

Once the current process/factory/supply chain/warehouse has been fully analyzed and optimized for performance, the Process Digital Twin can be used to evaluate additional business improvement opportunities as well as new process/factory/supply chain/warehouse enhancements to meet future demand or evaluate new specific strategic initiatives. The key value items to the business are as follows:

- Optimize the deployment of capital by evaluating and selecting the highest ROI projects for implementation.

- Optimize the design and evaluate the overall improvement in the performance of new systems such as automation before contracting and implementation.

- Evaluate future business strategies, such as new product introductions, market expansion resulting in higher demand, or growth such as additional manufacturing capacity, to fully understand the impact on current business as well as future ROI and business performance.

4.4 Integrating

Once the Process Digital Twin is ready and validated with the agreed data model, it can be integrated into the data pipeline from the enterprise systems to initialize and run the Digital Twin with current and actual data to get the best results for any further evaluation (predictive results) or planning and scheduling (prescriptive results). The key value items to the business are as follows:

- Finalized data sources, storage and integration mechanisms to enable the data pipeline to support real time data flow in support of the Process Digital Twin and other business analysis tools.

- Revised, corrected and aligned enterprise data sources and systems to ensure accurate information at the necessary level of detail. The Process Digital Twin acts as a magnifying glass to help validate and update the data to meet the requirements and standards for successful digital transformation.

- Dynamic and agile analysis and experimentation based on current data, allowing the organization to react in near-real-time to events both internal and external to the business such as changes in demand, labor issues, material supply, transportation delays and more.
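
One practical check before initializing the Digital Twin from the pipeline is whether all sources are time relevant, since some systems run close to real-time while others are batch-oriented. The sketch below flags sources whose latest snapshot is older than an allowed age. The source names, timestamps, one-hour tolerance and the `stale_sources` function are assumptions made for illustration only.

```python
from datetime import datetime, timedelta


def stale_sources(last_updated, as_of, max_age):
    """Return the names of sources whose latest snapshot is older than max_age."""
    return sorted(
        name for name, timestamp in last_updated.items()
        if as_of - timestamp > max_age
    )


# Hypothetical snapshot times for three enterprise systems.
as_of = datetime(2024, 1, 15, 12, 0)
last_updated = {
    "MES": datetime(2024, 1, 15, 11, 58),  # near-real-time feed
    "WMS": datetime(2024, 1, 15, 11, 30),  # refreshed every half hour
    "ERP": datetime(2024, 1, 14, 23, 0),   # nightly batch run
}

stale = stale_sources(last_updated, as_of, max_age=timedelta(hours=1))
print(stale)
```

With these sample values only the nightly batch source exceeds the tolerance, which signals that a run started now would mix current shop-floor status with older planning data.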

4.5 Prescribing

After the data integration, standardization and update effort is complete, the Process Digital Twin can be used to analyze, plan and schedule operations. It can then prescribe operations down to task level per resource (i.e., equipment, labor, transporters) as well as material requirements at each point of execution. The key value items to the business are as follows:

- Near-real-time planning and scheduling based on manual or automated triggers.

- Shopfloor-ready schedules based on current data and status of the process/factory/supply chain/warehouse to avoid or minimize any disruption to operations because of changes or events, optimizing the operations to efficiently meet demand.

- Maximizing flow (throughput of the right items) through the system/supply chain to meet demand by continuously assessing and addressing constraints in near-real-time to prevent synchronization delays, minimize unnecessary setups and changeovers, and ensure efficient use of materials.
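
To illustrate the changeover point above, the following sketch compares a pure earliest-due-date sequence with one that groups jobs by product family. The job data, field names and the `group_by_family` heuristic are hypothetical and deliberately simple; this is not the scheduling logic used by Simio. Grouping reduces changeovers but can delay individual jobs, a trade-off that a simulation-based scheduler would evaluate against all other constraints.

```python
def count_changeovers(sequence):
    """Count adjacent job pairs that belong to different product families."""
    return sum(
        1 for current, following in zip(sequence, sequence[1:])
        if current["family"] != following["family"]
    )


def group_by_family(jobs):
    """Order families by their earliest due job, then jobs by due time within a family."""
    earliest_due = {}
    for job in jobs:
        family = job["family"]
        earliest_due[family] = min(earliest_due.get(family, job["due"]), job["due"])
    return sorted(jobs, key=lambda j: (earliest_due[j["family"]], j["family"], j["due"]))


# Hypothetical jobs on one machine; "due" is in hours from now.
jobs = [
    {"id": "J1", "family": "A", "due": 1},
    {"id": "J2", "family": "B", "due": 2},
    {"id": "J3", "family": "A", "due": 3},
    {"id": "J4", "family": "B", "due": 4},
]

edd = sorted(jobs, key=lambda j: j["due"])  # earliest due date first
grouped = group_by_family(jobs)

print(count_changeovers(edd), count_changeovers(grouped))
```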

4.6 Autonomous Decisioning

When the Process Digital Twin is fully integrated and operational, and all employee and workflow constraints are aligned across the process/factory/supply chain/warehouse to ensure complete accuracy and feasibility, it can be connected back to execution systems such as an MES for manufacturing equipment or AMR fleet managers for transporters. This enables direct orchestration of task-level execution on the shop floor. The key value items to the business are as follows:

- Complete low touch/no touch operations to maximize productivity, equipment utilization and throughput to meet demand based on the current conditions of the system (process/factory/supply chain/warehouse).

- Total control to maximize operational agility by allowing the system to react to changes in the process, demand, material availability or any other constraints directly impacting the flow of product through the system.

- Achieving the goal of the “Smart Factory” by having autonomous decision making for all resources in the system without human involvement unless selected or required based on specific conditions or trigger events.

The value proposition will differ for each business depending on the project phasing and on the initiatives under review or operations under management. It will also depend on the digital and organizational maturity of the organization and its ability to transform to fully autonomous operations. Some businesses may always require a significant level of human intervention because of the nature of their operations.

5. Conclusion

Simio provides a simulation modeling framework based on intelligent objects to optimize both the design and operation of complex systems. Simio’s key features that support the design-through-operation continuum include a code-free object-oriented modeling architecture, a data-centric framework to support both data-driven and data-generated models, simulation and scheduling experimentation and reporting features, neural networks to optimize decisions, and enterprise deployment options for experimentation and scheduling on private and public clouds. Simio provides a comprehensive simulation platform for a complete digital transformation journey.

This journey will be different for each business based on its specific characteristics and digital maturity as well as its organizational readiness to become an agile, automated and potentially autonomous operation. The digital continuum provides a practical framework for guidance along this journey, helping organizations and their transformation teams complete the primary steps for success rather than trying to fast-track the process of becoming a “smart factory”.

The Simio Intelligent Adaptive Process Digital Twin provides both an ideal vehicle to facilitate and support this total transformation journey from design to operation and a well-proven framework to guide the development and transformation steps to ensure success.